Meta Survey Signals Safety Concerns for Teen Instagram Users

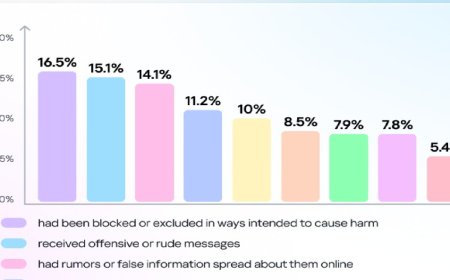

An internal Meta Platforms survey found that one in five Instagram users aged 13 to 15 reported seeing “unwanted nude or sexual images” on the platform. The finding emerged from documents filed in a recent lawsuit in a United States court, according to Reuters.

The documents were made public last Friday (February 20) as part of an ongoing case in a federal court in California. They include excerpts from the March 2025 deposition of Adam Mosseri, the head of Instagram.

Meta spokesperson Andy Stone said the statistic was taken from a 2021 survey conducted among Instagram users, not from a review of posts.

The court filings further revealed that in the same survey, about 8 percent of users aged 13 to 15 said they had seen “someone commit suicide or threaten to do so” on Instagram.

Another document disclosed in the same case, dated January 20, 2021, shows that a Meta researcher recommended focusing on teenage users. According to the researcher, teenagers act as a “catalyst” for their families, influencing how their younger siblings and parents use apps, which could help attract and retain new users.

In his deposition, Mosseri stated that most sexual images are exchanged between users through private messages. He noted that reviewing such content requires careful consideration of user privacy. “Many people do not want us to read their messages,” he said.

Meta recently announced new policies aimed at improving safety for teenage users, set to take effect by the end of 2025. The company said it will remove images and videos containing nudity and explicit sexual activity, with exceptions for medical and educational content.

DBTech/BMTO/OR