Deepfakes and Democracy: The New Election Security Challenge

Deepfakes are no longer limited to visual trickery; they have evolved into a comprehensive generative media ecosystem. Modern deepfake systems combine diffusion models, transformer-based architectures, and advanced voice synthesis to achieve a high level of realism. Neural voice cloning is now possible from just a few minutes of audio. With only a small number of clean images (few-shot learning), identity-based face-swap or face-reenactment videos can be created with ease. Open-source tools such as FaceSwap and DeepFaceLab, alongside commercial platforms offering advanced text-to-video and voice generation services, have effectively democratized deepfake production: barriers to entry have fallen, while the capacity to produce content at scale has grown.

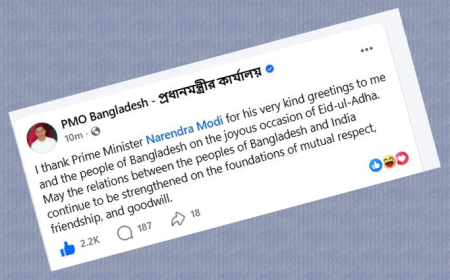

In the electoral context, the risk extends beyond the mere creation of false content; it becomes part of a coordinated information-flow strategy. Planned deepfake campaigns typically operate in several stages: (1) crafting misleading messages aimed at specific voter groups, (2) rapidly amplifying emotionally charged content to drive virality, and (3) eroding trust in electoral processes and political candidates. Here, misinformation and disinformation operate in parallel. Misinformation often spreads unintentionally and gains organic reach, while disinformation is strategic: it is deliberately created and disseminated at carefully chosen moments. Deepfakes can merge these two streams, because a clip fabricated deliberately can then be passed along in good faith by people who believe it is real. Even if such content does not directly alter election results, it can significantly distort the environment in which voters make decisions.

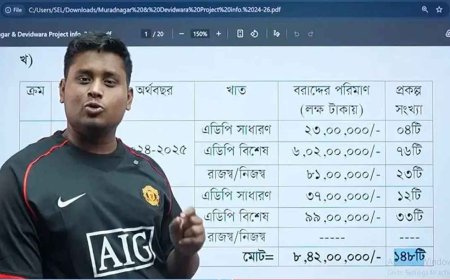

This risk is particularly relevant within Bangladesh’s information ecosystem. A combination of social media–driven public opinion, limited digital literacy, and a culture of rapid sharing can turn deepfakes into a high-impact, low-cost attack vector. The final phase of an election—especially the 24 to 48 hours before voting—creates what can be described as a “late-stage narrative injection window.” During this period, deliberately misleading content leaves very little time for verification, removal, or institutional clarification, resulting in a disproportionately high impact. Provocative statements falsely attributed to candidates, fake announcements about election postponements, or fabricated videos of administrative decisions are not merely misleading; they can generate social unrest and even market instability.

From a technical standpoint, detection remains challenging. AI-based detection models can identify anomalies through frequency analysis, facial landmark inconsistencies, blink patterns, and irregularities in voice spectral features. Systems such as Deepware Scanner or Reality Defender can flag potentially AI-generated content, while tools like InVID assist with frame-by-frame analysis. However, as generation technologies evolve rapidly, detection technologies face continuous pressure. This dynamic has effectively become an arms race.
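As a rough illustration of one of the signals mentioned above (blink patterns), the sketch below counts blinks from a per-frame "eye aspect ratio" (EAR) series and flags clips with an unusually low blink rate. It assumes the EAR values have already been computed by some facial landmark detector, which is not shown. The function names and the threshold values (`closed_threshold`, `min_closed_frames`, `min_rate`) are illustrative assumptions, not parameters taken from any of the tools named here, and a low blink rate alone is at most a weak hint, not proof of manipulation.

```python
from typing import List


def count_blinks(ear_per_frame: List[float],
                 closed_threshold: float = 0.21,   # assumed value, tune per detector
                 min_closed_frames: int = 2) -> int:  # assumed value
    """Count blinks as runs of consecutive frames whose EAR is below the threshold."""
    blinks = 0
    run = 0  # length of the current run of "eye closed" frames
    for ear in ear_per_frame:
        if ear < closed_threshold:
            run += 1
        else:
            if run >= min_closed_frames:
                blinks += 1
            run = 0
    # A blink that is still in progress when the clip ends also counts.
    if run >= min_closed_frames:
        blinks += 1
    return blinks


def blinks_per_minute(ear_per_frame: List[float], fps: float) -> float:
    """Convert the blink count into a rate, given the video frame rate."""
    if not ear_per_frame or fps <= 0:
        raise ValueError("need at least one frame and a positive fps")
    return count_blinks(ear_per_frame) * 60.0 * fps / len(ear_per_frame)


def low_blink_rate(ear_per_frame: List[float], fps: float,
                   min_rate: float = 4.0) -> bool:  # assumed cutoff, illustrative only
    """Flag a clip for closer human review if its blink rate is below min_rate."""
    return blinks_per_minute(ear_per_frame, fps) < min_rate
```

In practice a signal like this would be one input among several, since newer generators can reproduce natural blinking and a real speaker may simply blink rarely in a short clip.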

In this context, user behavior is a critical layer of cyber defense. Not sharing suspicious video or audio before verifying it, checking sources and timestamps, and using reverse image search are essential first-line defenses. Beyond this, institutional preparedness is necessary: rapid response protocols, immediate clarification through official channels, and election-focused information integrity strategies.
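To give a sense of how a reused or lightly altered image can be matched against an earlier one, the sketch below implements a simple "average hash" comparison. This is only one basic idea behind near-duplicate matching; it is not a description of how any particular reverse image search service works. It assumes each image has already been reduced to a small grid of grayscale values (0 to 255) by an image library, which is not shown, and the names `average_hash`, `hamming_distance`, `likely_same_image`, and the `max_distance` cutoff are illustrative assumptions.

```python
from typing import List, Sequence


def average_hash(gray: Sequence[Sequence[int]]) -> List[int]:
    """Return one bit per pixel: 1 if the pixel is brighter than the grid's mean."""
    pixels = [p for row in gray for p in row]
    if not pixels:
        raise ValueError("empty image grid")
    mean = sum(pixels) / len(pixels)
    return [1 if p > mean else 0 for p in pixels]


def hamming_distance(a: Sequence[int], b: Sequence[int]) -> int:
    """Count the positions at which two equal-length bit lists differ."""
    if len(a) != len(b):
        raise ValueError("hashes must have the same length")
    return sum(x != y for x, y in zip(a, b))


def likely_same_image(gray_a: Sequence[Sequence[int]],
                      gray_b: Sequence[Sequence[int]],
                      max_distance: int = 5) -> bool:  # assumed cutoff for an 8x8 grid
    """Treat two grids as the same underlying image if their hashes are close."""
    return hamming_distance(average_hash(gray_a), average_hash(gray_b)) <= max_distance
```

Because the hash depends on each pixel relative to the mean, a uniformly brightened copy of a frame produces the same bits, which is why an old photo recirculated with minor edits can often still be traced to its source.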

In the age of deepfakes, election security is no longer solely about protecting servers, networks, or databases; it is a battle to preserve the integrity and consistency of information itself. Even if infrastructure remains secure, the digital distortion of public opinion through the abuse of otherwise legitimate systems represents a new and serious threat to democracy. The question now is not only one of technological capability, but whether we are prepared, through policy, technology, and public awareness, to recognize this information war as a real threat and respond accordingly.

Author: Ashfaque Safal, Information Technology Specialist; Joint General Secretary, Bangladesh System Administrators Forum

Disclaimer: The views expressed in the Opinion section are solely those of the author. Digital Bangla Media holds no responsibility for these opinions. As a reflection of pluralism, this article has been published without editorial modification. Any offense or agitation caused is entirely a matter of personal interpretation.