Shadow AI Risks Rise as Sophos Flags Cybersecurity Strain

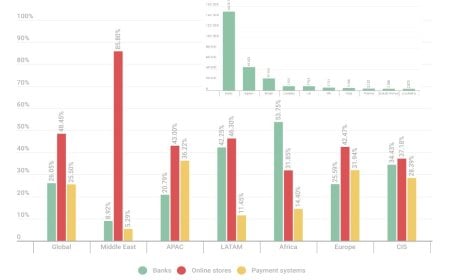

Global cybersecurity firm Sophos has revealed in its latest survey that 46 percent of organizations worldwide are using unauthorized AI tools. At the same time, 72 percent of companies report having policies on AI usage, while 12 percent admit they do not know whether Shadow AI applications exist within their organizations. The study also found that employees lose an average of 4.6 hours per week to work pressure and fatigue, an increase of 12 percent compared to 2024.

According to the findings, 83 percent of organizations follow AI usage policies, but only 56 percent believe these measures actually improve security.

The report, titled "The Future of Cybersecurity in Asia Pacific and Japan," was published in collaboration with Tech Research Asia. Now in its fifth edition, the study highlights rising levels of work-related stress across the Asia-Pacific and Japan (APJ) region. It noted that 86 percent of surveyed organizations reported high levels of stress in cybersecurity roles, up from 85 percent in 2024. Key causes include cyber threats, resource shortages, and complex processes that contribute to employee burnout.

The 2025 report also points to the dual impact of artificial intelligence (AI) on cybersecurity. On the one hand, AI tools help streamline tasks; on the other, their use often complicates cybersecurity operations.

The study emphasizes that cybersecurity-related stress is not merely a technical challenge but also a business issue, as it affects workforce productivity, staff retention, and the ability to respond to cyberattacks effectively.

Aaron Bugal, Field Chief Information Security Officer for Sophos in the APJ region, said: "The combination of rising cyberattacks, regulatory demands, and limited resources is making cybersecurity unsustainable. This year, stress and burnout in cybersecurity roles have surpassed operational workloads. Planned use of AI tools could improve efficiency and accelerate security operations, but Shadow AI—that is, the use of unapproved and uncontrolled AI tools within organizations—is introducing new risks."

He further warned that organizations must remain vigilant against threats such as phishing emails and understand how sensitive data is being shared and utilized through AI tools. "It is equally important to know the rules and the extent of AI usage," he added.