AI Abuse and Electoral Anxiety: A Growing Democratic Challenge

Artificial intelligence (AI), while bringing numerous conveniences into our lives, is also creating new threats. With elections drawing near, instances of AI misuse have recently become noticeable, and the scale of such misuse is expected to grow as election day approaches. AI was misused in previous elections as well, but on a much more limited scale. Now, with smartphones in nearly everyone’s hands and AI-based chatbots accessible to the general public, it is time to seriously consider the risks of misuse.

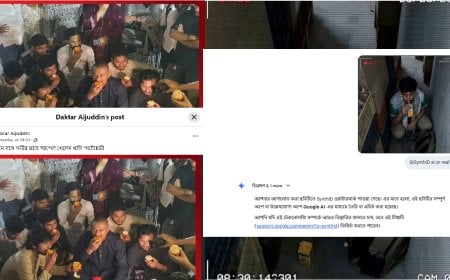

As elections approach, new rumours are deliberately created, new bot accounts emerge, and misinformation and disinformation flood social media platforms. Added to this is the growing use of AI-based deepfake videos, audio clips, and images.

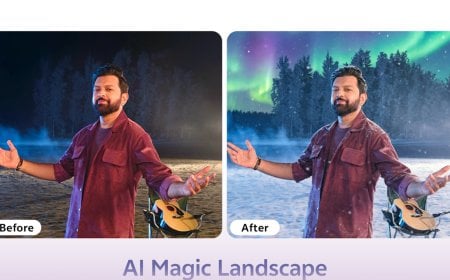

Deepfake is a technology that uses artificial intelligence to make fabricated content look real. By closely mimicking a person’s facial features or voice, it can produce videos, images, and audio clips that are hard to distinguish from genuine recordings of that person. Deepfakes are used for political, social, or personal purposes. Their most frequent misuse is in pornography, often targeting celebrities. In elections, fake statements can be spread using a candidate’s face or voice, or videos inciting violence can be fabricated in a candidate’s name. When such content is circulated before voting, it creates widespread confusion among voters. The 24 to 48 hours before an election are the most dangerous, because little time remains to debunk false content before votes are cast.

AI now makes it easy to generate thousands of fake news articles, posts, and blogs. Ahead of elections, AI is being used—and will continue to be used—to spread rumours such as vote cancellations, election postponements, or claims that a particular candidate has withdrawn from the race. Social media platforms are already seeing a surge in bot accounts and fake pages. These bots are used to portray opponents negatively, distort statements, glorify one side while vilifying another, and manipulate public perception. As election day draws closer, the intensity of such activities will increase further.

AI is also being used to generate thousands of fake reviews and comments aimed at influencing public opinion across digital platforms. Bot accounts are manufacturing fake popularity signals, such as claims about who is more popular, which party is likely to win, or what percentage of votes a party may receive, and these mislead ordinary citizens.

What the Government and the Election Commission Can Do to Prevent AI Misuse

AI can be a supportive technology in elections, but without proper control, it can become a serious threat to democracy. To curb AI misuse, the government and the Election Commission can take the following measures:

- Enter into agreements with social media platforms such as Facebook, X, Instagram, and YouTube to ensure rapid removal of suspicious, fake, or misleading content during election periods.
- Establish a central unit under the Election Commission equipped with deepfake detection tools and expert teams to conduct 24/7 monitoring.
- Formulate clear laws and policies on AI usage, including strict punishment for spreading fake AI-generated content.
- Develop rapid fact-checking systems to detect rumours quickly and run online awareness initiatives.
- Launch voter awareness campaigns on how to identify deepfake videos, and broadcast these campaigns on television channels.
- Introduce a mandatory “Code of Conduct” for political parties under the Election Commission, including written commitments not to misuse AI technologies.

If prompt action is not taken to prevent AI misuse, unwanted and potentially dangerous situations may arise at any time before or after the elections. It is therefore crucial to understand the proper use of AI, remain vigilant, and stay safe.

Author: Arif Mainuddin, Cybersecurity Specialist, Cyber Canyon; Author of the book “AI Projukti’r Hate Khori” (Introduction to AI Technology)

Disclaimer: The opinions expressed in this article reflect the author’s personal views. Digital Bangla Media bears no responsibility for these opinions. In line with the principle of media pluralism, this article has been published without editorial alteration. Any offense or discomfort arising from the content is entirely a matter of individual interpretation.