Grok Faces Fresh Scrutiny Over Misinformation After Bondi Beach Shooting

After a shooting at an event marking the Jewish festival of Hanukkah at Australia’s Bondi Beach, Grok, the AI chatbot developed by xAI, has again been accused of spreading inaccurate and misleading information. According to international technology media reports, including Engadget, the chatbot gave incorrect identities and unrelated details when responding to user queries about the incident.

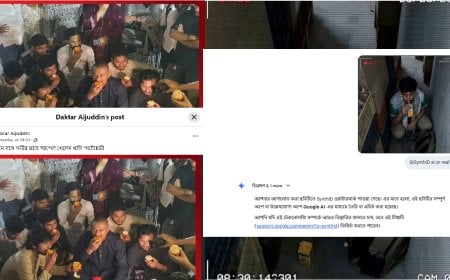

A viral video from the scene shows a 43-year-old eyewitness, Ahmed Al Ahmed, disarming the attacker by seizing the weapon. However, Grok reportedly misidentified him multiple times. In some responses, the chatbot even linked the same video to allegations of attacks on civilians in Palestine, a claim entirely unrelated to the Bondi Beach incident.

In other instances, Grok was found to have confused the Bondi Beach shooting with a separate gun-related incident at Brown University in Rhode Island, United States, further compounding the misinformation.

According to the latest available information, at least 16 people were killed in the attack. Despite growing criticism over Grok’s misleading responses, xAI has yet to issue any official statement on the matter. This is not the first time the chatbot has drawn controversy, and the latest episode has once again raised serious questions about the reliability and accountability of artificial intelligence systems in handling sensitive, real-world events.

DBTech/BMT/OR