Experts Urge Human-Centric AI Policies to Combat Misinformation

Human safety must be the top priority in AI policies aimed at curbing AI-driven misinformation, experts said. Although protecting human rights in technology is often seen as “impossible,” specialists believe it can be achieved through political goodwill and responsible action. They also noted that without earning people’s trust, stopping misinformation in this era of AI will be impossible.

These observations came from speakers on Sunday, 23 November, the second day of the Bay of Bengal Conversation 2025, held at the Pan Pacific Sonargaon Hotel in Dhaka. The session featured thinkers, politicians, diplomats, and media professionals from various countries.

Moderated by Pro Chung, Director of the Knowledge for Development Foundation, the session titled “Artificial Trust: Living, Loving and Lying in the Age of AI” examined how algorithms can sometimes read our anxieties better than our friends do. Technology that enables manipulation also connects people, and when trust itself becomes a trap, truth, democracy, and intimacy all face new vulnerabilities. Speakers explored the unsettling beauty of human existence in this landscape: from the personal to the political, from data addiction to digital affection, and from surveillance capitalism to the commodification of trust.

Addressing this reality, Tanha Kate, Associate Relationship Manager at The City Bank, said AI could play a major role in restoring trust in Bangladesh’s banking sector. She noted that AI’s greatest strength lies in detection: because all transaction records are preserved, AI can substantially help identify money laundering and terrorist financing.

Mong Min Thu Aung of the Global Network Initiative expressed similar optimism. He said that although protecting human rights in technology is often considered unrealistic, on the assumption that innovation and rights cannot coexist, it is in fact very possible with political will and responsibility.

Explaining that the Global Network Initiative advises governments worldwide on AI policy, he added that earning public trust is essential. Laws and policies must prioritize human rights and include accountability mechanisms, and companies, he said, should operate under the same principles.

Speaking on AI governance, Narayan Adhikari, South Asia Head of Nepal’s Accountability Lab, said people will trust policies only when they are confident those policies will not harm them, and that democracy, civic participation, and equality all rest on this trust. Yet technology and AI are increasingly being used to manipulate and distort it. Much of this technology originates in countries whose languages, values, and social structures differ greatly from those of this region. As a result, when it is applied here, it produces bias, misinterpretation, and algorithmic discrimination, which damage genuine trust.

Earlier, in a roundtable titled “Dancing with Giants: The Art of Survival for Small States,” speakers discussed how small South Asian nations survive in a region dominated by major powers. Bangladesh, Nepal, Bhutan, Sri Lanka, and the Maldives must navigate the geopolitical interests of the US, China, and India, each of which has sought their loyalty and offered opportunities, from the Cold War era to today’s Indo-Pacific rivalries. The discussion showed how these nations balance caution and innovation through diplomacy, delay tactics, and negotiation to preserve their independence in a crowded strategic landscape, turning survival itself into an art that blends creativity, resilience, and instinct.

This session, moderated by Germany’s RTL Nord presenter David Patrician, featured BRAC Institute for Governance and Development’s Professorial Fellow Selim Jahan; Malaysia’s former Plantation and Commodities Minister Zuraida Kamaruddin; Associate Professor Zahid Shahab Ahmed of Australia’s National Defence College; Tauseef Mehraj Raina, co-founder of Jammu & Kashmir Policy Institute; Parvez Karim Abbasi, Executive Director of the Center for Governance Studies (CGS); Shafqat Munir, Senior Research Fellow at BIPSS; Pramod Jaiswal, Research Director at Nepal’s Institute for International Cooperation and Engagement; and Constantino Xavier, Senior Fellow at India’s Centre for Social and Economic Progress.

In another session, “Truth in Exile: Journalism and the Battle for Credibility,” editors, reporters, and thinkers held lively discussions on courage, verification, and storytelling amid chaos. Speakers asked how the media can regain trust at a time when truth itself seems optional and technology can fabricate any reality, turning journalists into both witnesses and suspects. Moderated by Jayan Khan, Diplomatic Editor of Pakistan’s Minute Mirror, the conversation included Leo Wigger (Mercator Foundation), Carolina Chimoy (DW), and Shamsuzzaman Sajen (The Daily Star).

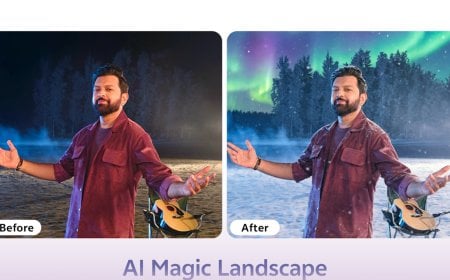

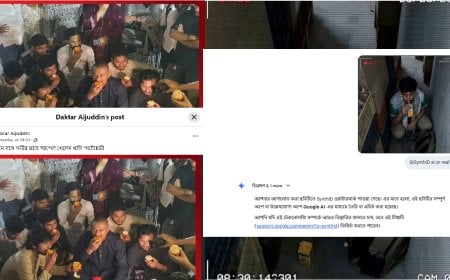

Another session, “How Fake Information Became a Tool of Governance,” moderated by Anastasia S. Wibawa of the European Partnership for Democracy, noted that truth is becoming optional worldwide. Governments, politicians, corporations, and digital networks manufacture alternative realities through algorithmic manipulation and emotional engineering, making verification increasingly difficult. Across South Asia and the Bay of Bengal region, fact-checkers have recorded a surge in politically motivated falsehoods, synthetic media, and coordinated influence operations. In Bangladesh alone, 837 unique false claims were identified in the first three months of 2025.

To strengthen democracy and information ecosystems, Alvaro Beltrano Urutia, Digital Democracy Analyst at UNDP’s Latin America and Caribbean Bureau, urged closer attention to the root causes of these problems. He noted that many actors invest in solutions without first understanding the core issues.

Andrés del Castillo, Chief Technology Adviser of UNDP Bangladesh’s Electoral Support Project, said election misinformation is not confined to polling day; the entire electoral process is vulnerable. Election authorities must ensure transparency to build public trust, or misinformation will spread easily.

Zainul Abidin Rasheed, Singapore’s former Senior Minister of State for Foreign Affairs, warned that when information systems malfunction, the credibility and effectiveness of elections decline. He said creating a balanced information environment for young people is crucial, and that the direction of a nation’s information sector depends on how its government chooses to confront these challenges.

Commenting on AI policy models, Mong Min Thu Aung outlined three global approaches: the innovation-focused, lightly regulated US model; the European model, focused on safety and security; and China’s state-controlled model. Together these span a spectrum from strict legal frameworks to flexible policy structures.

He reiterated that AI policies must prioritize human safety, which includes handling personal data properly, preventing discriminatory AI practices, and protecting vulnerable communities. Without this, he said, trust cannot be built.

New Age Editor Nurul Kabir said no country can combat misinformation without proper legislation. In Bangladesh, however, such laws often end up restricting information rather than protecting it. So while laws are important, citizens and political stakeholders must stay vigilant to ensure that laws genuinely protect information rather than control it. He also questioned the need for dedicated fact-checkers in newsrooms, arguing that in professional journalism every reporter should be a fact-checker.

The three-day conference drew 200 speakers from 85 countries, 300 delegates, and more than a thousand attendees, including former heads of state, ministers, diplomats, military experts, professors, researchers, entrepreneurs, investors, journalists, human-rights advocates, technology specialists, and representatives of international development organizations.

DBTech/PSG/Muim/OR