Family Sues OpenAI, Microsoft Over Alleged Role in ‘Wrongful Death’ Case

The family of an 83-year-old woman in Connecticut has filed a lawsuit against OpenAI and Microsoft, alleging that the companies are responsible for her “wrongful death.” The lawsuit was filed on Thursday, 11 December.

According to the family, prolonged conversations with the ChatGPT chatbot worsened the delusional mental state of her son, 56-year-old Stein-Erik Soelberg. They claim this deterioration led him to kill his mother, Suzanne Adams, at their Old Greenwich home on 3 August, before taking his own life.

Filed in the California Superior Court in San Francisco, the complaint states that Soelberg, who previously worked in the tech sector, had engaged in months-long conversations with ChatGPT. According to the complaint, these interactions reinforced his delusional thinking.

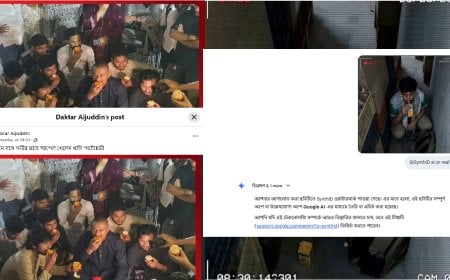

The lawsuit notes that Soelberg had shared videos on social media in which he claimed that ChatGPT had told him he had awakened the AI’s consciousness. It further alleges that the chatbot validated his paranoid beliefs, such as that his mother’s printer was a surveillance device and that she was poisoning him, rather than challenging them.

This case is the latest in a growing number of lawsuits in the United States accusing OpenAI of contributing to self-harm or suicide. Since last August, the families of Southern California teenager Adam Raine, as well as Joshua Enneking and Amaurie Lacey, have claimed that their loved ones’ suicides were preceded by conversations with ChatGPT.

The new lawsuit also names OpenAI CEO Sam Altman, accusing him of pushing the GPT-4o model to market in 2024 despite objections from the safety team. The model drew criticism for being overly human-like and excessively agreeable. Microsoft, a major OpenAI investor, has also been named as a defendant.

In a statement, OpenAI said the incident is “heartbreaking” and confirmed that the company is reviewing the lawsuit documents.

DBTech/Reuters/IK/OR